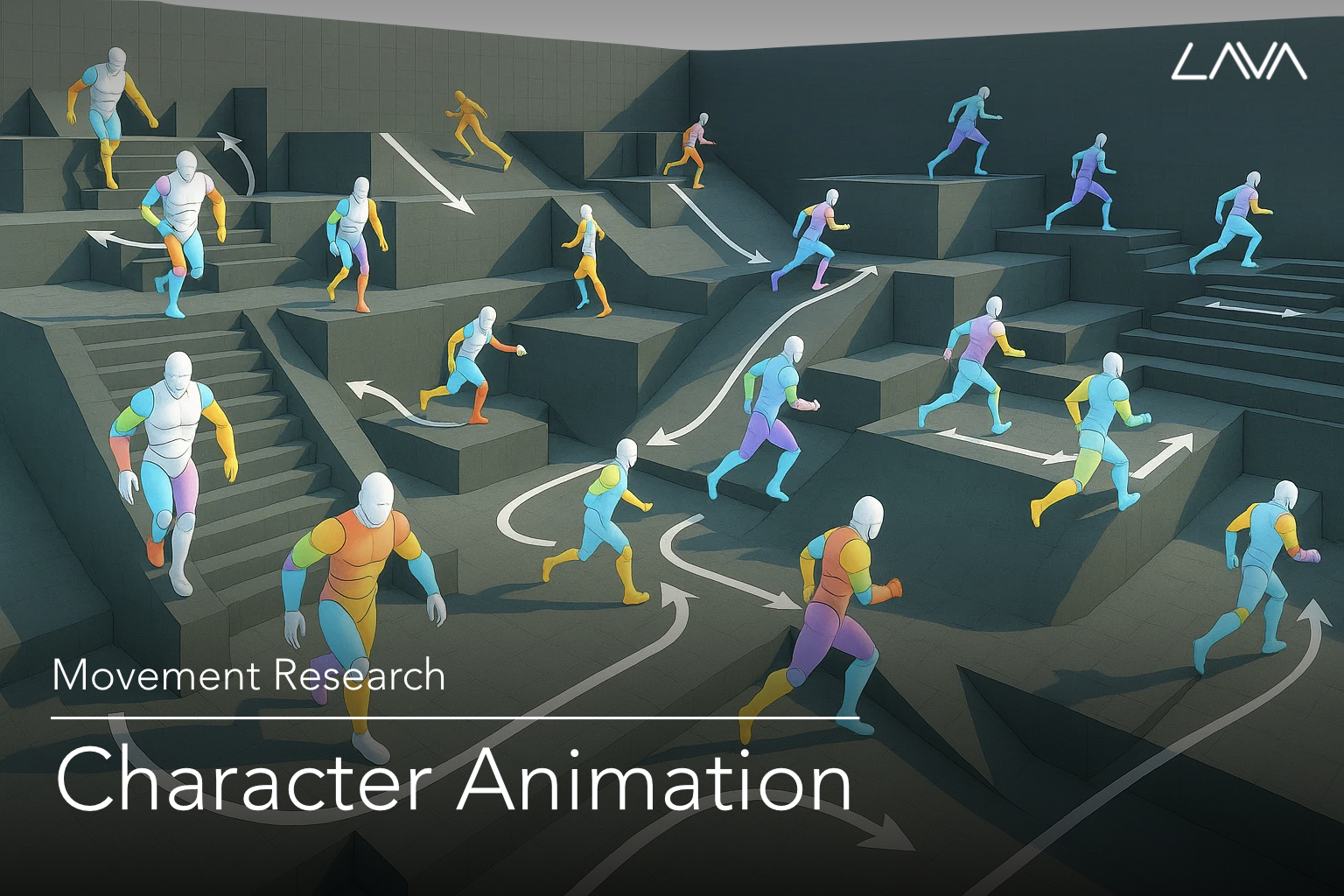

How can digital avatars move in ways that are realistic, adaptable, and purposeful enough to meet users’ demands? Our character animation research develops learning-based methods that go beyond conventional animation techniques such as keyframing and motion capture. Our approach enables avatars to respond dynamically within interactive environments while remaining easily controllable by users. This research has wide applications in gaming, immersive virtual worlds, and digital media production, where interactive and expressive character motion is essential.

We explore methods to tackle motion generation, editing, retargeting, control, and planning. Our systems empower avatars to exhibit natural movements in dynamic environments, express diverse stylistic nuances, and execute user-driven actions seamlessly. We build frameworks that integrate large-scale motion data, generative models, and physics-based simulation into cohesive learning-based methodologies. This foundational work paves the way for developing immersive and intelligent experiences with virtual avatars.

Recent papers

- InterFaceRays: Interaction-Oriented Furniture Surface Representation for Human Pose Retargeting, T. Jin, Y. Lee, and S-H Lee, Computer Graphics Forum (Proc. Eurographics 2025), Feb. 2025 [project]

- MOCHA: Real-time Motion Characterization via Context Matching, D-K Jang, Y. Ye, J. Won, and S-H Lee, ACM SIGGRAPH Asia 2023, Dec. 2023 [project]

- DAFNet: Generating Diverse Actions for Furniture Interaction by Learning Conditional Pose Distribution, T. Jin and S-H Lee, Computer Graphics Forum (Proc. Pacific Graphics), Sep. 2023 [project]

- Motion Puzzle: Arbitrary Motion Style Transfer by Body Part, D-K Jang, S. Park, and S-H Lee, ACM Transactions on Graphics (TOG), Jan. 2022 [project]

- Diverse Motion Stylization for Multiple Style Domains via Spatial-Temporal Graph-Based Generative Model, S. Park, D-K Jang, and S-H Lee, The ACM SIGGRAPH / Eurographics Symposium on Computer Animation (SCA), Jun. 2021

- Constructing Human Motion Manifold with Sequential Networks, D-K Jang and S-H Lee, Computer Graphics Forum, May 2020 [project]

- Aura Mesh: Motion Retargeting to Preserve the Spatial Relationships between Skinned Characters, T. Jin, M. Kim, and S-H Lee, Computer Graphics Forum (Proc. Pacific Graphics), May 2018 [project]

- Multi-Contact Locomotion Using a Contact Graph with Feasibility Predictors, C. Kang and S-H Lee, ACM Transactions on Graphics, 36(2), 22:1-14, April 2017 (to be presented at SIGGRAPH 2017) [project]